OpenClaw and the Evolution of Agentic Security: A New Approach to Sandboxing

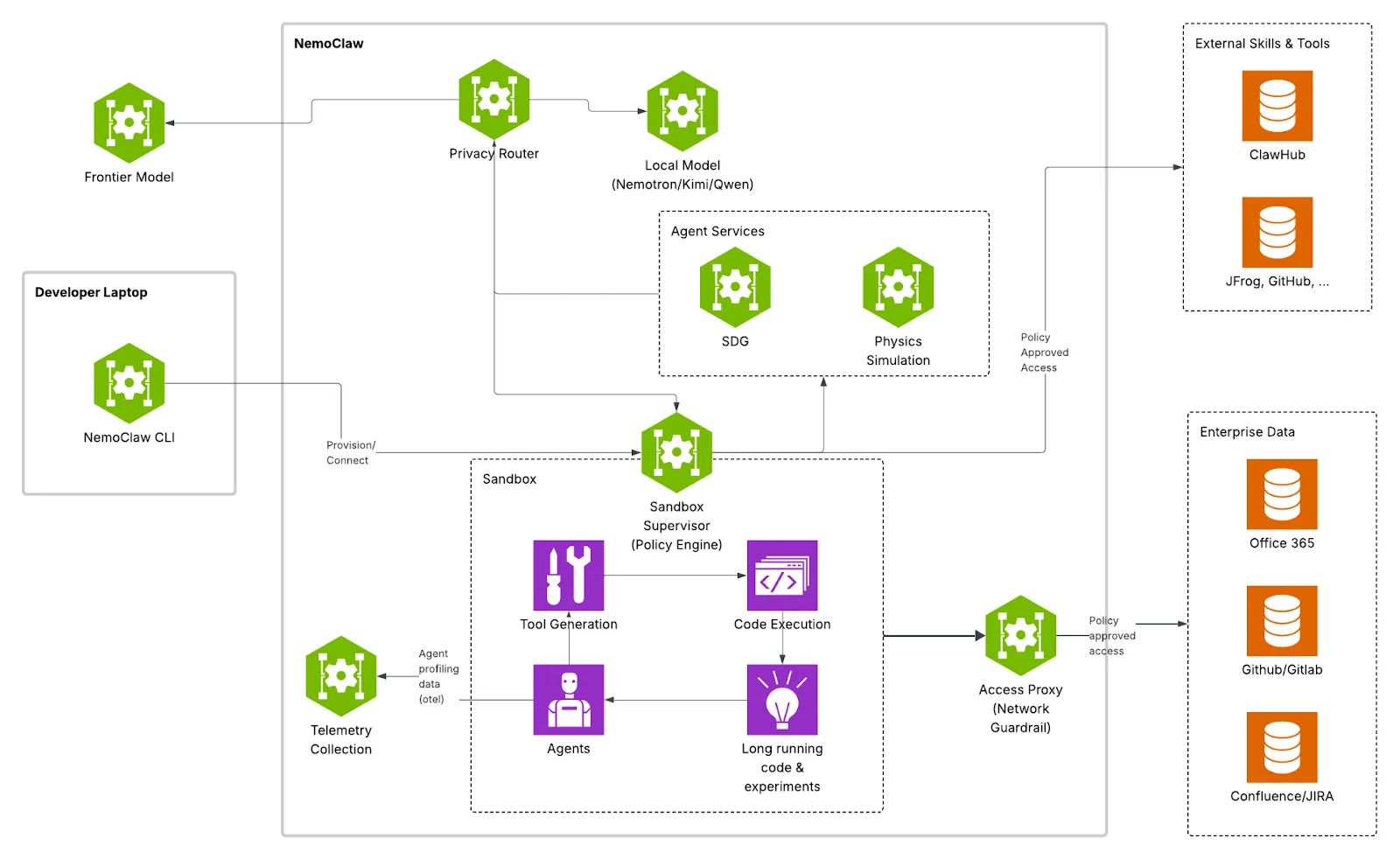

The open-source agentic automation tool, recently under fire due to security concerns surrounding its decision-making autonomy, has found a new operational framework thanks to a dedicated stack introduced by NVIDIA. This integration provides the system with additional security layers and optimizations, allowing even less experienced users to deploy it within a secure, controlled sandbox.

The News

The recent release of NemoClaw and OpenShell introduces an open-source stack designed to enclose OpenClaw within an isolated environment.

This runtime was built to allow developers to safely deploy autonomous AI agents, known as "claws." The primary technological goal is to harness the massive productivity of these self-evolving agents while maintaining strict control over corporate data and privacy.

OpenShell aims to overcome the limitations of traditional control systems by introducing structural security primitives. This approach gives the agent access to the tools and data it needs to work while mitigating the risk of compromising the host system.

At the core of this architecture is "out-of-process" policy enforcement. Current solutions embed guardrails and system prompts within the agent itself, which renders them ineffective if the AI is compromised. OpenShell instead acts as an external layer of governance. Each session is isolated in its own container, and permissions are verified by the policy engine in real time, so the agent has no path from inside its own process around infrastructure-level restrictions.

As of today, the ecosystem is entirely open-source. A technologically significant aspect, marking a strong departure from the traditionally closed CUDA ecosystem, is that the orchestration infrastructure is not tied to NVIDIA hardware. The platform allows agents to be managed on machines without proprietary GPUs, drastically expanding enterprise adoption possibilities (though proprietary hardware is still required for full local acceleration).

The deployment approach is designed to lower the barrier to entry. Developers can launch the isolated environment with a single command-line instruction (e.g., openshell sandbox create), without having to modify a single line of their AI agents' source code.

TL;DR

March 16, 2026, marked the debut of NemoClaw and OpenShell, an open-source ecosystem that represents a major step forward in operating autonomous AI agents securely. Until now, leaving an AI to work unattended meant exposing infrastructure to considerable risks. This technology tackles the problem at its root by creating a virtual sandbox with strict rules managed outside the AI. This approach allows the agent to self-evolve, write code, and complete complex tasks while curbing any ability to exfiltrate sensitive data or compromise the host system.

The Open-Source Ecosystem for Autonomous AI

Imagine having a tireless digital assistant you can entrust with an entire project, leaving it to work autonomously for days. Until recently, granting such freedom meant risking accidental deletions or the exposure of private documents. With this new architecture, developers get a true secure playroom: the AI has all the tools it needs to learn and produce, but operates in an environment where it physically cannot cause external harm.

For industry professionals, this is a significant paradigm shift in deployment. The added value lies in its ease of implementation: a single terminal command boots up the infrastructure, which scales identically whether running on local workstations or complex enterprise clusters.

Overcoming the Boundary Between Autonomy and Security

When we delegate a task to a human, we trust their judgment; with traditional software, we trust static code. Modern AI agents, however, are inherently unpredictable and constantly evolving. This unpredictability has historically made it difficult to balance efficiency with security. You either locked the AI down, forcing the user to approve every single action, or you gave it carte blanche and accepted the risk. Today, that compromise is no longer necessary: the agent receives the operational freedom it needs within a tightly enforced architectural perimeter.

Under the hood, the infrastructure neutralizes critical cyber threats. An agent with persistent shell access is a massive attack surface where a simple prompt injection can turn into a corporate disaster. The new architecture shifts control from algorithmic "good behavior" to the host system's infrastructure constraints, preventing unintended privilege escalation.

The "Out-of-Process" Approach: Ironclad Security

Think about how a bank vault works. If the security checks were managed by the exact same person trying to withdraw the money, the system would fail. Until now, AI "guardrails" were embedded in the model's own logic; if the AI was tricked, those guardrails collapsed. The leap forward in this architecture lies in moving the locks to the outside: no matter how much the agent is manipulated, the rules are managed by an external abstraction layer that the agent cannot rewrite.

Technically, this is "out-of-process policy enforcement." While popular tools rely on internal system prompts, OpenShell wraps the agent in a governance layer completely separate from the LLM process. Sessions are isolated at the kernel level, and permissions are verified in milliseconds before an action executes at the OS level.
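To make the idea concrete, here is a minimal Python sketch of a deny-by-default gate that sits between an agent's requested action and its execution. It is not OpenShell's API: the `Action` type, the policy constants, and the `enforce` function are all hypothetical names chosen for illustration. The toy also runs in a single process, so it only shows the shape of the check; in a real out-of-process design the policy data and the gate would live outside the agent's address space.

```python
import posixpath
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "read_file" or "http_request" (hypothetical kinds)
    target: str  # a path or a hostname


# Hypothetical policy data, held by the enforcement layer, never by the agent.
READABLE_ROOTS = ("/workspace",)
ALLOWED_HOSTS = frozenset({"api.internal.example"})


def is_allowed(action: Action) -> bool:
    """Deny by default; allow only what the policy names explicitly."""
    if action.kind == "read_file":
        # Normalize first so "/workspace/../etc/passwd" cannot slip through.
        path = posixpath.normpath(action.target)
        return any(path == root or path.startswith(root + "/")
                   for root in READABLE_ROOTS)
    if action.kind == "http_request":
        return action.target in ALLOWED_HOSTS
    return False  # unknown action kinds are always refused


def enforce(action: Action) -> Action:
    """Gate called before the action is allowed to reach the OS."""
    if not is_allowed(action):
        raise PermissionError(f"denied: {action.kind} {action.target}")
    return action
```

The design point is that nothing the agent says or generates can change `READABLE_ROOTS` or `ALLOWED_HOSTS`; a prompt injection can alter what the agent asks for, but not what the gate permits.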

Advanced Sandboxing and Granular Control

When you download a smartphone app, the OS warns you if it tries to access your camera. The new stack acts on a much deeper level. If the AI tries to download a package from the internet, the network policy layer intervenes, analyzing the binary and its destination. This way, the AI can acquire new skills, but the risk of installing malicious software is reduced by the policy engine.
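As a rough illustration of checking both the destination and the binary, the Python sketch below accepts a download only if it comes from a trusted registry and its SHA-256 digest is not on a blocklist. The article does not describe how OpenShell actually inspects packages, so the registry list, the blocklist, and the `may_install` function are assumptions made for this example.

```python
import hashlib
from urllib.parse import urlparse

# Hypothetical policy data; a real deployment would load this from
# configuration managed outside the agent.
TRUSTED_REGISTRIES = frozenset({"pypi.org", "files.pythonhosted.org"})
BLOCKED_SHA256 = frozenset({
    hashlib.sha256(b"known-bad-payload").hexdigest(),  # stand-in digest
})


def may_install(url: str, payload: bytes) -> bool:
    """Return True only if both the source host and the content pass."""
    host = urlparse(url).hostname  # None when the URL has no scheme or host
    if host not in TRUSTED_REGISTRIES:
        return False
    digest = hashlib.sha256(payload).hexdigest()
    return digest not in BLOCKED_SHA256
```

A blocklist of digests is only one possible content check; an allowlist of pinned hashes would be stricter, at the cost of more maintenance.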

These aren't just generic Docker containers; this is a purpose-built engine for LLM dynamics, operating simultaneously at the file system, network, and process levels. If an agent attempts to call an unauthorized API, the request is blocked—but the system allows the agent to "reason" about the block and propose a targeted policy update to the human developer.
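The block-then-propose flow described above can be sketched in a few lines of Python. All names here (`Policy`, `Proposal`, `attempt`, `approve`) are hypothetical and do not come from OpenShell; the sketch assumes the simplest possible policy, a set of allowed hostnames, and assumes that only a human reviewer ever calls `approve`.

```python
from dataclasses import dataclass, field
from typing import Optional, Set


@dataclass
class Policy:
    allowed_hosts: Set[str] = field(default_factory=set)


@dataclass(frozen=True)
class Proposal:
    host: str
    justification: str  # written by the agent, read by a human


def attempt(policy: Policy, host: str, justification: str) -> Optional[Proposal]:
    """Return None if the call may proceed, else a proposal for review."""
    if host in policy.allowed_hosts:
        return None
    # The call is blocked. The agent cannot change the policy itself;
    # it can only describe the narrow change it would like.
    return Proposal(host=host, justification=justification)


def approve(policy: Policy, proposal: Proposal) -> None:
    """Apply a proposal. In this sketch, only the human developer calls it."""
    policy.allowed_hosts.add(proposal.host)
```

For example, with a policy that allows only `pypi.org`, an attempt to reach `api.weather.example` returns a `Proposal`; once a developer passes it to `approve`, the same attempt returns `None` and the call goes through.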

Smart Privacy and Total Flexibility

Companies are often hesitant to share industrial secrets with cloud-connected AIs. To solve this limitation, the system introduces a privacy "traffic cop."

The integrated privacy router routes workloads based strictly on corporate directives, not the agent's preferences. It can dynamically direct requests to locally hosted models for sensitive data and route only generic queries to external frontier models. This optimizes resources and keeps confidential information locked down. Furthermore, the entire stack is model-agnostic: teams can integrate it into any existing workflow without rewriting their agents' source code.
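A minimal Python sketch of such a router follows. It assumes, purely for illustration, that the corporate directive is expressed as a list of regular expressions marking sensitive content; the patterns, the `route` function, and the `"local"` and `"frontier"` labels are hypothetical and are not taken from the actual stack.

```python
import re

# Hypothetical corporate directive: anything matching these patterns
# must stay on locally hosted models.
SENSITIVE_PATTERNS = (
    re.compile(r"\bconfidential\b", re.IGNORECASE),
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US SSN-shaped numbers
)


def route(prompt: str, agent_preference: str = "frontier") -> str:
    """Pick a backend from the directive alone.

    agent_preference is accepted but deliberately ignored, mirroring the
    idea that the agent does not get a vote on where data is sent.
    """
    if any(p.search(prompt) for p in SENSITIVE_PATTERNS):
        return "local"
    return "frontier"
```

Pattern matching is a crude stand-in for real data classification, but it shows the key property: the routing decision depends on policy and content, never on what the agent asks for.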