OpenAI Releases GPT-5.4

OpenAI's new state-of-the-art model is out. It promises better results in fewer interactions and is positioned as the new go-to standard for both everyday tasks and complex coding work.

What's New

OpenAI has officially released GPT-5.4, now available via ChatGPT, the API, and Codex.

The new model promises reduced end-to-end latency for multi-step agentic calls, lower context usage for cost optimization, and a better understanding of instructions and codebases. This significantly reduces the need for prompt tuning and strict system instructions.

According to the official documentation, besides replacing gpt-5.2 as the default model in the web interface and gpt-5.3-codex on Codex, gpt-5.4 is also the new default choice for API usage.

Search capabilities through tool_search have been optimized to retrieve only essential information, further refining the model's accuracy in tool selection based on the provided instructions.

Among the new features, and in line with competing models, GPT-5.4 expands its context window to 1 million tokens (priced separately on a usage basis). It also introduces built-in Computer Use, the ability to interact directly with software through the UI, to execute development tasks following a build-run-verify-fix pattern, alongside automatic context compaction to optimize coding sessions.

TL;DR

The New Model

GPT-5.4 is OpenAI's new flagship model, designed to cover a wide spectrum ranging from complex tasks to quick, everyday operations.

On ChatGPT, it is now the default model for new conversations. As with its predecessors, users still have the option to select the Pro mode to leverage advanced reasoning capabilities and solve more complex problems.

On the API front, four different versions are available:

| Variant | Use Case |
| --- | --- |
| gpt-5.4 | General tasks, complex reasoning, general knowledge, and multi-step agentic operations. |
| gpt-5.4-pro | Tough problems that may take longer to solve and need deeper reasoning. |
| gpt-5-mini | Cost-optimized reasoning and chat; balances speed, cost, and capability. |
| gpt-5-nano | High-throughput tasks, especially straightforward instruction-following or classification. |

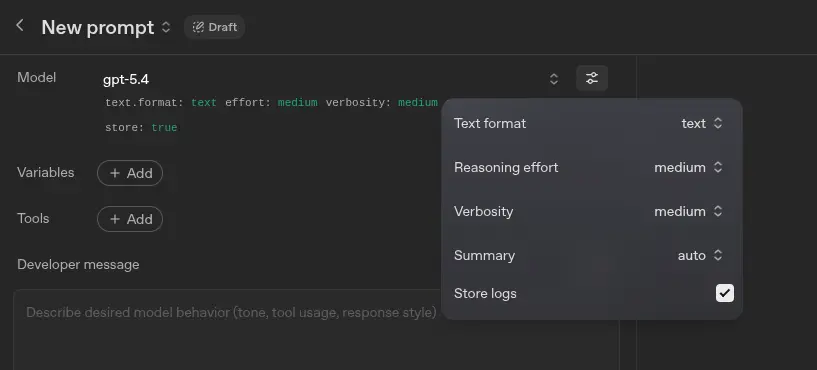

Regarding configuration options, similarly to previous models, developers can adjust the reasoning effort and set the response verbosity (consequently limiting token usage) at both the system instruction and user prompt levels. This parameter directly affects output quality: for a coding task, reduced verbosity yields more compact code; conversely, giving the model more leeway will result in highly structured and heavily commented code.
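
As a rough illustration of the two knobs described above, the sketch below assembles a request payload without sending it. The field names (`reasoning.effort` and `text.verbosity`) follow the shape of OpenAI's Responses API as used with earlier GPT-5 models. Treat them, and the value sets listed here, as assumptions to check against the current API reference. `build_request` is a hypothetical helper, not part of any SDK.

```python
from typing import Any

# Assumed value sets; verify against the current API reference.
REASONING_EFFORTS = {"none", "low", "medium", "high"}
VERBOSITY_LEVELS = {"low", "medium", "high"}


def build_request(prompt: str, effort: str = "medium", verbosity: str = "medium",
                  model: str = "gpt-5.4") -> dict[str, Any]:
    """Assemble a Responses-style payload (hypothetical helper, sends nothing)."""
    if effort not in REASONING_EFFORTS:
        raise ValueError(f"unknown reasoning effort: {effort!r}")
    if verbosity not in VERBOSITY_LEVELS:
        raise ValueError(f"unknown verbosity: {verbosity!r}")
    return {
        "model": model,
        "input": prompt,
        "reasoning": {"effort": effort},   # how much the model deliberates
        "text": {"verbosity": verbosity},  # how long and detailed the output is
    }


# Same task, two configurations: compact output vs. detailed, commented output.
task = "Write a function that reverses a string."
terse = build_request(task, effort="low", verbosity="low")
detailed = build_request(task, effort="high", verbosity="high")
```

Keeping the payload construction in one place makes it easier to compare settings side by side when looking for a baseline.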

1M-Token Context Window

The introduction of such a massive context window allows GPT-5.4 to operate with much more generous limits, ingesting a larger volume of task-related information (e.g., highly detailed instructions, extensive documentation, and massive codebases).

While there are no official announcements regarding specific subscription tiers required to unlock this capacity, a custom configuration is recommended when using it via Codex. Specifically, developers need to tweak the model_context_window and model_auto_compact_token_limit parameters, keeping in mind that this remains an experimental feature.
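
To make the two settings concrete, the sketch below renders them as `key = value` lines of the kind a Codex configuration file would hold. The 1,000,000 figure is the context window discussed here. The 90% compaction threshold, the `key = value` layout, and the helper `render_context_overrides` are illustrative assumptions rather than recommended or documented values.

```python
def render_context_overrides(window_tokens: int, compact_percent: int = 90) -> str:
    """Render the two settings as `key = value` lines (hypothetical helper)."""
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    if not 0 < compact_percent <= 100:
        raise ValueError("compact_percent must be between 1 and 100")
    # Integer arithmetic avoids floating-point rounding on large token counts.
    compact_limit = window_tokens * compact_percent // 100
    return (
        f"model_context_window = {window_tokens}\n"
        f"model_auto_compact_token_limit = {compact_limit}\n"
    )


print(render_context_overrides(1_000_000), end="")
```

Setting the compaction limit below the window size leaves headroom, so compaction is triggered before the window is exhausted. The right margin depends on the workload.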

Tool Usage

The new model features a built-in Computer Use tool, a fundamental capability that allows the AI to interact directly with graphical interfaces for tasks requiring specific operations (e.g., browsing the web).

This use case is particularly handy for workflows where completing a task involves filling out web forms or analyzing screenshots to spot visual bugs.

This repository provides some sample scenarios and a demo app showcasing how to integrate the Computer Use tool into an automated workflow.

Migration Guide

Although gpt-5.4 is an almost universal drop-in replacement for previous flagship models, there are a few dynamics to watch out for when migrating from older versions.

Generally, OpenAI suggests starting by adjusting the reasoning effort to find an output baseline that matches past results. There is also a Prompt Optimizer available (requires an OpenAI account) that automatically tailors legacy prompts to the internal rules of version 5.4.

Here is a quick reference guide for adaptation:

- gpt-5.2: gpt-5.4 with default settings is a sufficient direct replacement.
- o3: gpt-5.4 with reasoning set to medium or high. It's recommended to test on medium first and switch to high only if the results don't fully meet expectations.
- gpt-4.1: gpt-5.4 with reasoning disabled (none). Starting from none, it's advisable to optimize prompts for the new model; if that's not enough, progressively increase the reasoning effort.
- o4-mini or gpt-4.1-mini: gpt-5-mini coupled with good prompt tuning is a highly effective replacement.
- gpt-4.1-nano: gpt-5-nano with prompt tuning ensures a smooth transition.
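
The quick reference above can also be kept as a small lookup table in code, which is handy when migrating many call sites at once. This is a minimal sketch: the `Target` type and `suggest_target` function are hypothetical names, and the entries only restate the starting points listed above.

```python
from typing import NamedTuple, Optional


class Target(NamedTuple):
    model: str
    reasoning_effort: Optional[str]  # None means "leave at the default"
    note: str


# Starting points taken from the quick reference; adjust after testing.
MIGRATION = {
    "gpt-5.2": Target("gpt-5.4", None, "default settings"),
    "o3": Target("gpt-5.4", "medium", "switch to high only if results fall short"),
    "gpt-4.1": Target("gpt-5.4", "none", "tune prompts first, then raise effort gradually"),
    "o4-mini": Target("gpt-5-mini", None, "pair with prompt tuning"),
    "gpt-4.1-mini": Target("gpt-5-mini", None, "pair with prompt tuning"),
    "gpt-4.1-nano": Target("gpt-5-nano", None, "pair with prompt tuning"),
}


def suggest_target(legacy_model: str) -> Target:
    """Return the suggested starting configuration for a legacy model name."""
    try:
        return MIGRATION[legacy_model]
    except KeyError:
        raise KeyError(f"no migration suggestion for {legacy_model!r}") from None


suggestion = suggest_target("o3")
print(f"{suggestion.model} (effort: {suggestion.reasoning_effort}), {suggestion.note}")
```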