Google Monthly Build Updates: March 2026

Welcome back to our regular roundup of the latest updates from the Google Developer Program Review. Front and center this month are the new Gemini 3.1 model, the WebMCP preview, and official Google documentation made accessible directly through an API and an MCP server.

Google Gemini 3.1

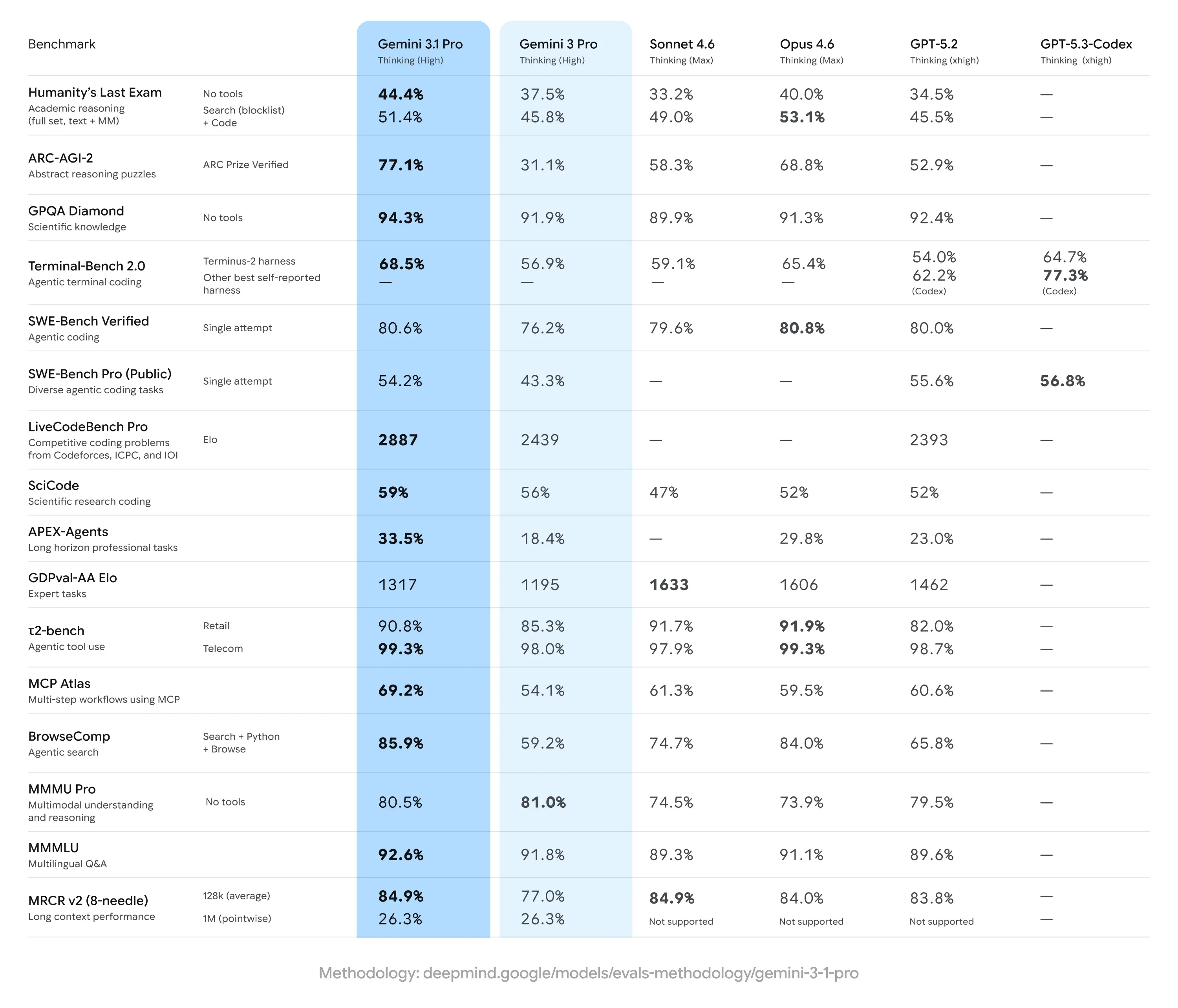

Following the recent Deep Think update, the Gemini lineup moves forward with the model that made this advanced reasoning possible: Gemini 3.1 Pro.

Beyond improved reasoning on complex problems, 3.1 Pro achieves a verified score of 77.1% on ARC-AGI-2, a benchmark specifically designed to evaluate a model's ability to solve entirely novel logic patterns.

TL;DR

The new model's reasoning translates into practical features, especially in technical and creative fields. A standout addition is code-based animation: from a simple text prompt, the model can generate animated SVG graphics ready to be embedded in a website.

Because they are built with code rather than pixels, these animations stay crisp at any scale and are far smaller than traditional video files. This is paired with a stronger ability to synthesize complex systems, allowing developers to connect intricate APIs to intuitive user interfaces (for example, a live dashboard powered by public telemetry streams).
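To give a sense of what "animation as code" means in practice, here is a minimal hand-written sketch in TypeScript. It is not model output; the `pulsingCircleSvg` helper and its options are hypothetical names chosen for illustration. It only shows how a few lines of markup, using the standard SVG `<animate>` element, describe a resolution-independent animation.

```typescript
// Hypothetical helper for illustration: builds a small animated SVG as a string.
interface PulseOptions {
  size: number;        // width and height of the viewBox, in user units
  color: string;       // any CSS color value
  durationSec: number; // length of one animation cycle, in seconds
}

function pulsingCircleSvg({ size, color, durationSec }: PulseOptions): string {
  const center = size / 2;
  const rMin = size / 8; // smallest radius of the pulse
  const rMax = size / 3; // largest radius of the pulse
  return [
    `<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 ${size} ${size}">`,
    `  <circle cx="${center}" cy="${center}" r="${rMin}" fill="${color}">`,
    // The radius grows and shrinks forever; no raster frames are stored.
    `    <animate attributeName="r" values="${rMin};${rMax};${rMin}" dur="${durationSec}s" repeatCount="indefinite" />`,
    `  </circle>`,
    `</svg>`,
  ].join("\n");
}

const svg = pulsingCircleSvg({ size: 120, color: "#4285f4", durationSec: 2 });
console.log(svg);
```

The resulting string can be pasted inline into an HTML page or saved as an `.svg` file. Because the `viewBox` defines the coordinate system, the same markup renders sharply at any display size.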

Currently, Google is rolling out Gemini 3.1 Pro in preview to continue fine-tuning agentic workflows ahead of general availability. Developers can access the model via the Gemini API in Google AI Studio, the Gemini CLI, Android Studio, and the new agentic development platform, Google Antigravity. Enterprises can integrate it through Vertex AI and Gemini Enterprise. For consumers, the model is rolling out in the Gemini app and NotebookLM, with exclusive access or higher usage limits for those on the premium Google AI Pro and Ultra plans.

Developer Knowledge API and Documentation via MCP Server

With the rapid expansion of AI-driven development tools, such as agentic platforms and command-line interfaces, there is a crucial need to provide these models with access to consistently accurate and up-to-date technical documentation.

Since Large Language Models rely heavily on the context they receive, developers working with Google technologies need their virtual assistants to know the latest Firebase features, recent Android API changes, and current Google Cloud best practices. To meet this need, Google has announced the public preview of the Developer Knowledge API and its accompanying Model Context Protocol (MCP) server. This ecosystem is compatible with tools like Gemini CLI, Gemini Code Assist, Claude Code, and GitHub Copilot, creating a direct, machine-readable bridge to the official documentation.

TL;DR

The Developer Knowledge API is designed to act as a programmatic single source of truth for all public Google documentation. Instead of relying on potentially outdated training data or brittle web-scraping systems, developers can now search and retrieve documentation pages directly in Markdown format.

Alongside the API is the release of an official MCP server. By connecting this server to their Integrated Development Environment (IDE) or AI assistant, developers give the system the ability to "read" and understand the documentation. This results in significantly more reliable support: the AI can provide practical implementation guides, targeted troubleshooting for complex errors, and detailed comparative analyses of different services for specific use cases.

To get started with these tools (currently in public preview), developers first generate a dedicated API key within their Google Cloud project. Next, they enable the MCP server via the Google Cloud CLI by running `gcloud beta services mcp enable developerknowledge.googleapis.com`, and then update their tool's configuration file.
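As a rough illustration of the last step, the sketch below generates a configuration entry of the kind many MCP clients read. Everything specific in it is an assumption rather than something stated in the announcement: the `mcpServers` / `httpUrl` / `headers` layout, the server label `google-developer-knowledge`, the endpoint URL, and the use of the `X-Goog-Api-Key` header. Check your own tool's documentation for the exact file name and schema.

```typescript
// Assumed shape of one MCP server entry; real clients may use different keys.
interface McpServerEntry {
  httpUrl: string;
  headers: Record<string, string>;
}

interface McpConfig {
  mcpServers: Record<string, McpServerEntry>;
}

function buildMcpConfig(apiKey: string): McpConfig {
  if (apiKey.trim() === "") {
    throw new Error("An API key from your Google Cloud project is required");
  }
  return {
    mcpServers: {
      // Hypothetical label; any name your client accepts would do.
      "google-developer-knowledge": {
        // Assumed endpoint, not confirmed by the announcement.
        httpUrl: "https://developerknowledge.googleapis.com/mcp",
        // Assumed auth header for passing the API key.
        headers: { "X-Goog-Api-Key": apiKey },
      },
    },
  };
}

// Replace the placeholder with your own key before using the output.
const configJson = JSON.stringify(buildMcpConfig("YOUR_API_KEY"), null, 2);
console.log(configJson);
```

Generating the file from a function rather than editing it by hand makes it easier to inject the key from an environment variable and keep it out of version control.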

While the current release focuses on providing high-quality unstructured Markdown text, the goal for general availability is to introduce support for structured content, such as specific code blocks and direct references to API entities. Further expansion of the documentation corpus and rapid content re-indexing to reduce latency are also on the roadmap.

The full documentation is available on the official MCP page.

WebMCP

Announced in preview via the Early Preview Program (EPP), WebMCP is a new standard designed to streamline the interaction between websites and AI agents. As more browsing is carried out by agents acting for users, the standard aims to give sites a consistent way to expose structured functions directly from web pages.

This way, site owners can define exactly how and where AI agents should operate within their platforms. The goal is for complex actions (booking a flight, filing a support ticket, navigating intricate data) to be executed with greater speed, precision, and reliability.

TL;DR

To make websites "agent-ready," WebMCP introduces two new types of APIs that overcome the limitations and brittleness of traditional direct DOM actuation (which often relies on complex visual scraping processes):

- Declarative API: Designed to let the AI perform standard actions that can be defined directly within HTML forms.

- Imperative API: Built to handle more complex and dynamic interactions that require JavaScript execution.

This direct communication channel eliminates ambiguity, creating a robust bridge between the site and the browser agent acting on the user's behalf.
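To make the imperative idea concrete, here is a minimal TypeScript sketch. It does not use the actual WebMCP API surface, which is still in preview; `ToolRegistry`, `ToolDefinition`, and the `file_support_ticket` tool are hypothetical names that only model the concept of a page exposing named, typed functions instead of leaving an agent to guess at the DOM. Handlers are synchronous here for brevity; a real page would most likely return promises.

```typescript
// Hypothetical model of a page-side tool registry. NOT the WebMCP API.
type FieldType = "string" | "number" | "boolean";
type ToolInput = Record<string, string | number | boolean>;

interface ToolDefinition {
  name: string;
  description: string;                    // what the agent reads to pick a tool
  inputSchema: Record<string, FieldType>; // required fields and their types
  execute: (input: ToolInput) => string;
}

class ToolRegistry {
  private tools: Record<string, ToolDefinition> = {};

  private has(toolName: string): boolean {
    return Object.prototype.hasOwnProperty.call(this.tools, toolName);
  }

  register(tool: ToolDefinition): void {
    if (this.has(tool.name)) {
      throw new Error(`Tool already registered: ${tool.name}`);
    }
    this.tools[tool.name] = tool;
  }

  // What an agent would discover: tool names, not page structure.
  list(): string[] {
    return Object.keys(this.tools);
  }

  call(toolName: string, input: ToolInput): string {
    const tool = this.has(toolName) ? this.tools[toolName] : undefined;
    if (!tool) {
      throw new Error(`Unknown tool: ${toolName}`);
    }
    // Validate every declared field before running the site's own logic.
    for (const field of Object.keys(tool.inputSchema)) {
      const expected = tool.inputSchema[field];
      if (typeof input[field] !== expected) {
        throw new Error(`Field "${field}" must be a ${expected}`);
      }
    }
    return tool.execute(input);
  }
}

const registry = new ToolRegistry();
registry.register({
  name: "file_support_ticket",
  description: "Create a support ticket with a subject and a severity from 1 to 4.",
  inputSchema: { subject: "string", severity: "number" },
  execute: (input) => `Ticket created: ${input.subject} (severity ${input.severity})`,
});

const ticket = registry.call("file_support_ticket", { subject: "Login fails", severity: 2 });
console.log(ticket);
```

The point of the design is that validation and business logic stay on the site's side: the agent supplies structured arguments, and malformed input is rejected before anything happens on the page.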

The highlighted use cases underscore the potential of this technology across several key sectors:

- Customer Support: Agents could automatically fill out support tickets, accurately entering all necessary technical details.

- E-commerce: Agents could find products, configure specific shopping options, and complete checkout more reliably.

- Travel: Users could delegate the search, filtering, and booking of flights to the agent, with structured data helping keep results accurate.