Cognitive Debt in the Name of Productivity

When business leaders (or individual professionals) have to honor promises of higher productivity made to investors or clients, adopting AI in production processes becomes essential. In practice, however, it ends up amplifying pre-existing issues.

What's the News

A study by two researchers from the Haas School of Business at UC Berkeley, reported by Harvard Business Review, has shown how the daily use of LLMs is changing our brains and work habits, debunking the myth that AI will grant us more free time.

Although the study focuses on work intensification, this research, along with many others, has led the scientific narrative to shift its focus toward the concept of "Cognitive Debt."

The conversation centers on how immediate productivity, while helpful for gaining a short-term advantage, is increasingly compromising our ability to make long-term decisions.

The research lasted 8 months (April to December 2025) and focused on a sample of 200 employees at an American tech company. These employees were provided with various AI tools, and each could decide whether, when, and how to use them for their daily tasks.

The result was clear-cut: researchers observed that employees worked at faster paces, took on a broader range of assignments (often filling in for colleagues or other professionals), and ended up extending their workday without any explicit request from the company or clients.

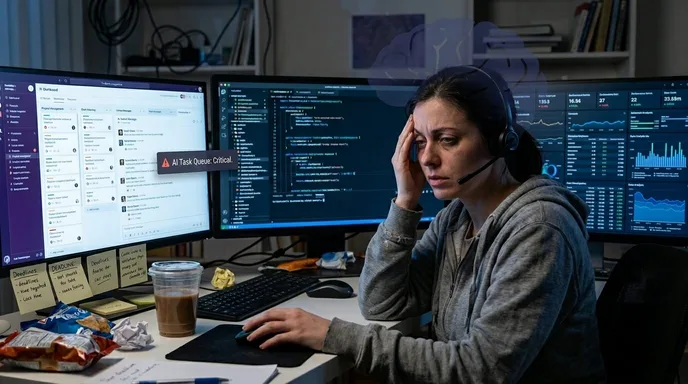

This phenomenon manifested through task expansion, the blurring of boundaries between work and private life (the so-called "work snacks", i.e., continuous interactions with AI even during breaks or in the evening), and an explosion of multitasking, leading to cognitive overload.

TL;DR

The study conducted by the UC Berkeley researchers highlights overproduction and the total encroachment of the work cycle onto free time.

The study is based on direct observation over eight months, between April and December 2025, involving two hundred employees of a US technology company. The researchers adopted a highly in-depth field approach, combining physical presence in the offices with the monitoring of internal communication channels and conducting over forty interviews across key departments such as engineering, design, research, product, and operations.

The results show a clear behavioral paradox: the introduction of artificial intelligence tools did not lighten daily duties; rather, it drastically intensified them. Having AI at their disposal, employees began working at a much more relentless pace, autonomously expanding the scope of their tasks and extending their working hours without any explicit directive. Artificial intelligence created the illusion of having an always-available partner, pushing people to manage multiple operational workflows in parallel. Employees started retrieving old, previously shelved projects and taking on tasks they would have delegated to others or avoided entirely in the past.

This initial feeling of momentum and extreme productivity, however, revealed negative long-term consequences. The constant attention-switching between different activities, combined with the need to keep verifying the results generated by the algorithms, created a significant cognitive overload. What initially appeared to be enhanced efficiency turned into a progressive creep of work expectations, leading to mental fatigue, burnout, and a severe weakening of decision-making clarity. New forms of "invisible" work also emerged, such as the extra time engineers spent mentoring colleagues and reviewing their AI-generated code, effectively adding further responsibilities instead of removing them.

This research shares some foundations with a study conducted by MIT, which published a paper measuring the effect of "cognitive debt" from the intensive use of LLMs. By analyzing EEG recordings of participants while they used assistive tools, the MIT team noted a significant drop in neural connectivity in those who systematically used AI to write or produce.

Alongside this evidence, other recent research highlights alarming data regarding the 17 to 25 age bracket. In this group, researchers recorded the highest levels of dependency on artificial intelligence and the lowest scores in critical thinking tests compared to all other age groups.

Final Thoughts

Several researchers suggest limiting and slowing down the adoption of Artificial Intelligence in education, especially among younger age groups.

As long as this approach doesn't block the use of AI entirely, it could be a sensible compromise: let's remember we are talking about a technology that is not going to disappear. Restricting its use a priori could create a technical debt that, especially for younger people, would mean being left behind and becoming less competitive in the job market in the long run.

These analyses led me to another reflection, one that has been tickling my brain since the early months of real AI adoption.

As a developer, I admit I've spent my fair share of sleepless nights in front of the PC. This happened both before and after the advent of coding agents, just for different reasons. Before, it was often to solve a particularly complex problem or to catch up on a task I couldn't properly fit into regular working hours. Now I find myself swallowed up in a "creative" flow induced precisely by the extra, optimized possibilities we have at our disposal.

I can perfectly understand the behavioral pattern where, faced with greater possibilities, the instinct is to "do more." But while researching material for this article, what stuck with me is how work efficiency and mental clarity inevitably ended up collapsing at a certain point anyway. And this doesn't just apply to large companies pushing the accelerator; it's a general pattern that affects everyone, from the employee, to the startup founder, down to the individual freelance professional.

If you think about it, a healthy workflow should normally be able to handle complexities. The attitude of filling gaps by taking on skills that don't belong to us (perhaps blindly delegating to an LLM parts of the work we haven't mastered just to save time or budget) shouldn't become the norm. If an individual feels forced to act this way to stay afloat, there is a fundamental flaw in the operational workflow or in the very conception of their work.

What has been glaringly obvious to me for a couple of years now is that AI acts as an accelerator not only for productivity, but also for all the pre-existing problems and disorganization. Instead of being resolved, organizational limits are magnified, masked by the illusion of being able to do everything, immediately, and in parallel.

Demonizing or inhibiting the use of AI is, in my opinion, completely wrong. But blindly adopting these tools in an environment that is already shaky, whether it's the entire tech division of a multinational or the everyday time management of a single freelancer, simply means automating and exacerbating a production system that is already on the brink of collapse.

For this reason, even if it pains me to partially agree with the dreamers and mystifiers posting on LinkedIn, it is necessary to have solid critical thinking and a clear strategy upfront: if there isn't a solid organization of work at the foundation, exactly what are we optimizing?